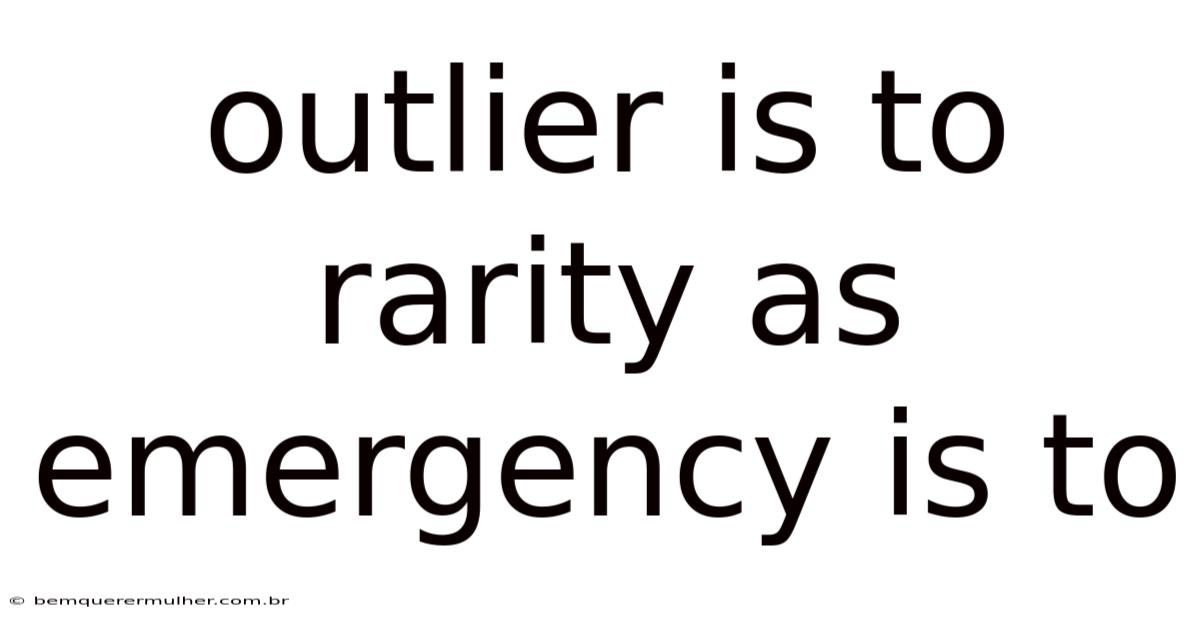

Outliers often provoke curiosity about what they mean within a broader dataset. In statistical terms, an outlier is a data point that deviates markedly from the predominant pattern. The deviation can arise from measurement errors, rare events, or simply the inherent variability of natural phenomena. Although outliers may look like mere anomalies, their presence often has real implications for understanding the structure of the data they belong to. Their relationship to other categories of events, such as emergencies, invites further exploration: emergencies, though transient and critical, parallel outliers in their disproportionate impact on outcomes. Both demand careful scrutiny, yet they serve distinct roles within their respective domains.

This article examines the role of outliers in statistical analysis, their relevance to real-world scenarios, and how they intersect with factors such as emergencies. Through this lens, the interplay between rarity and frequency shapes how we interpret data, with consequences for decision-making across disciplines. The following sections cover the mechanisms behind outlier detection, their implications for analysis, and how the comparison with emergencies enriches data interpretation. Both outliers and emergencies are critical indicators that warrant attention, albeit through different lenses, and understanding the connection helps us navigate complex datasets without overlooking nuances that could otherwise distort conclusions.

Understanding Outliers: Defining the Concept

Outliers are observations that deviate significantly from the bulk of a dataset, often representing extremes or rare occurrences. They may stem from data entry errors, measurement inaccuracies, or genuinely unusual phenomena that warrant special attention. For example, a sudden spike in sales for a product that has never been popular constitutes an outlier in retail analytics. An outlier might also reflect a significant event, such as a natural disaster affecting a region's infrastructure. Identifying outliers requires a balance between precision and sensitivity, because misclassification can lead to flawed conclusions. Statistical methods such as z-scores and interquartile ranges, along with visual tools such as box plots, are commonly used to detect these anomalies. The challenge lies in contextualizing the findings: an outlier that is statistically extreme might hold little practical value, while one that seems trivial could be important. This duality underscores the need for domain expertise when interpreting outliers, so that detection fits the specific context in which the data is analyzed. Identifying outliers is also a strategic task, not only an analytical one, since it often guides further investigation or prompts a reevaluation of underlying assumptions. Understanding outliers is therefore a cornerstone of data integrity, requiring both technical skill and critical thinking so that their identification does not distort the conclusions drawn from the data.
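To make the two statistical methods named above concrete, here is a minimal sketch using only Python's standard library. The `daily_sales` figures, the function names, and the thresholds (|z| > 3 and 1.5 × IQR, both common conventions) are illustrative assumptions, not values from any real dataset.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Return values whose distance from the mean exceeds `threshold` sample standard deviations."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return [x for x in values if abs(x - mean) / sd > threshold]


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [x for x in values if x < low or x > high]


# Hypothetical daily unit sales with one obvious spike.
daily_sales = [12, 14, 13, 15, 14, 13, 12, 16, 15, 95]

print("z-score flags:", zscore_outliers(daily_sales))
print("IQR flags:    ", iqr_outliers(daily_sales))
```

On this sample the IQR rule flags the spike while the z-score rule flags nothing. The spike inflates both the mean and the standard deviation, and with the sample standard deviation no point in a sample of 10 can reach |z| = 3 (the maximum is (n − 1)/√n ≈ 2.85). This is one reason the choice of method depends on sample size and distribution.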

The Role of Emergencies in Context

Emergencies, while distinct in nature, resemble outliers in their capacity to disrupt stability and demand immediate attention. They encompass situations that require urgent response, such as medical crises, natural disasters, or security threats, where timely intervention can significantly alter outcomes. These events often occur in dynamic environments where variability is inherent, yet their impact is typically profound and far-reaching. Just as outliers challenge the status quo by introducing unpredictability, emergencies force analysts, policymakers, and responders to re‑evaluate the assumptions that underlie routine operations. In both cases, whether an anomalous data point or a real‑world crisis, the signal is that something has moved outside the realm of expected behavior, and the response must be calibrated accordingly.

1. Detection Mechanisms: From Algorithms to Human Judgment

| Technique | Core Principle | Strengths | Weaknesses |
|---|---|---|---|
| Z‑Score / Standard Deviation | Distance from mean in units of σ | Simple, fast, works for roughly normal data | Sensitive to non‑normal distributions; outliers can inflate σ |
| Interquartile Range (IQR) | Points beyond 1.5 × IQR from Q1/Q3 | Robust to skewed data | May miss extreme tail events |
| Isolation Forest | Randomly partition data; outliers require fewer splits | Handles high‑dimensional data, non‑linear patterns | Black‑box nature; hyper‑parameter tuning needed |
| Local Outlier Factor (LOF) | Density‑based; compares local density to neighbors | Detects contextual outliers | Computationally intensive for large datasets |
| Domain‑Specific Rules | Expert‑crafted thresholds (e.g. …) | | |

While these algorithms provide a quantitative baseline, the final determination often hinges on human expertise. An analyst familiar with seasonal sales cycles may recognize a sudden surge as a product launch rather than a data error. Similarly, emergency managers rely on situational awareness (weather forecasts, infrastructure status, social media chatter) to decide whether an outlier in sensor readings truly signals a crisis.
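As one concrete instance of the density‑based approach in the table, the sketch below computes Local Outlier Factor scores from the standard definition (k‑distance, reachability distance, local reachability density). It is a brute‑force illustration for small inputs, not a substitute for a library implementation. The sample `points` and the choice `k=3` are assumptions, and the code assumes no duplicate points (duplicates would produce a zero reachability sum).

```python
import math


def local_outlier_factor(points, k=3):
    """Return one LOF score per point; scores well above 1 suggest an outlier."""
    n = len(points)
    neighbors, k_dist = [], []
    for i, p in enumerate(points):
        # Distances from point i to every other point, nearest first.
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbors.append([j for _, j in dists[:k]])
        k_dist.append(dists[k - 1][0])  # distance to the k-th nearest neighbor

    def local_reachability_density(i):
        # reach-dist(i, j) = max(k-distance(j), d(i, j))
        reach = [max(k_dist[j], math.dist(points[i], points[j])) for j in neighbors[i]]
        return len(reach) / sum(reach)

    lrd = [local_reachability_density(i) for i in range(n)]
    # LOF = average neighbor density divided by the point's own density.
    return [sum(lrd[j] for j in neighbors[i]) / (k * lrd[i]) for i in range(n)]


# Hypothetical 2-D readings: a tight cluster plus one distant point.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (0.5, 0.5), (5, 5)]
for point, score in zip(points, local_outlier_factor(points, k=3)):
    print(point, round(score, 2))
```

Points inside the cluster score close to 1, while the distant point scores several times higher. The all‑pairs distance computation is what makes the method expensive on large datasets, as the table notes.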

2. Implications for Analysis

- Model Robustness – Outliers can heavily bias regression coefficients, inflate variance, and degrade predictive performance. Techniques such as robust regression (e.g., Huber loss) or trimmed estimators mitigate this risk. In emergency modeling, robustness translates to more reliable forecasts of resource needs during atypical demand spikes.

- Risk Assessment – In finance, an outlier loss event may redefine Value‑at‑Risk (VaR) calculations, prompting tighter capital buffers. In public health, an outlier in infection counts can trigger early‑warning systems, prompting pre‑emptive containment measures.

- Decision‑Making Speed – Automated outlier detectors can flag anomalies in real time, akin to early warning sirens for emergencies. Even so, false positives can cause “alert fatigue,” just as unnecessary evacuations waste resources. Balancing sensitivity (true‑positive rate) and specificity (true‑negative rate) is therefore essential.
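The sensitivity and specificity trade‑off from the last point can be illustrated with a short sketch. The anomaly `scores`, the ground‑truth `is_real_event` labels, and the three thresholds are made up for illustration; in practice the labels would come from reviewed historical alerts.

```python
def confusion_rates(scores, labels, threshold):
    """Return (sensitivity, specificity) when every score >= threshold raises an alert."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    tn = sum(1 for s, y in zip(scores, labels) if s < threshold and not y)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    sensitivity = tp / (tp + fn)  # share of real events that were flagged
    specificity = tn / (tn + fp)  # share of non-events that stayed quiet
    return sensitivity, specificity


# Hypothetical detector scores and whether each case was a real event.
scores = [0.10, 0.20, 0.30, 0.35, 0.40, 0.60, 0.70, 0.80, 0.90, 0.95]
is_real_event = [False, False, False, False, True, False, False, True, True, True]

for threshold in (0.30, 0.50, 0.85):
    sens, spec = confusion_rates(scores, is_real_event, threshold)
    print(f"threshold={threshold:.2f}  sensitivity={sens:.2f}  specificity={spec:.2f}")
```

Lowering the threshold catches every real event at the cost of more false alarms, and raising it does the reverse. Where to set it depends on the relative cost of a missed event versus alert fatigue.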

3. Comparative Lens: What Outliers Teach Us About Emergencies

| Aspect | Outliers (Data) | Emergencies (Real‑World) |
|---|---|---|
| Origin | Measurement error, rare phenomenon, systemic shift | Natural disaster, accident, malicious act |
| Detection | Statistical thresholds, machine‑learning models | Sensors, reports, media, citizen alerts |
| Response | Data cleaning, model retraining, deeper investigation | Mobilize responders, allocate resources, issue warnings |
| Temporal Scale | Often instantaneous (single record) | Can evolve over minutes to weeks |
| Stakeholder Impact | Analysts, downstream models, business decisions | Public safety officials, communities, economies |

Viewing emergencies through the lens of outlier theory encourages a data‑centric preparedness mindset: treat each sensor reading, social‑media post, or logistical metric as a potential indicator of deviation, and embed automated anomaly detection into the operational workflow. Conversely, treating outliers as “emergencies in data” reminds analysts to allocate investigative resources proportionally, rather than dismissing every deviation as noise.

4. Practical Workflow: Integrating Outlier‑Emergency Thinking

- Ingestion & Pre‑Processing – Apply basic sanity checks (range limits, missing‑value handling). Flag records that fail these checks as candidate outliers.

- Automated Screening – Run a suite of detectors (e.g., IQR + Isolation Forest). Assign each flagged point a risk score based on detector consensus and magnitude of deviation.

- Contextual Enrichment – Pull auxiliary data (weather, calendar events, supply‑chain alerts). A high‑risk score that coincides with a known storm, for instance, upgrades the flag to an emergency level.

- Human Review Loop – Present a curated dashboard to domain experts. Allow them to confirm, reject, or annotate each case. Their feedback feeds back into model retraining (active learning).

- Action & Documentation – For confirmed emergencies, trigger SOPs (e.g., dispatch field teams, issue public alerts). For pure data outliers, log corrective actions (correction, exclusion, or retention with annotation).

- Post‑Event Analysis – Conduct root‑cause analysis to refine detection thresholds, improve sensor placement, or adjust business processes.
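The first three steps of this workflow can be sketched in a few lines. To stay within the standard library, a median/MAD‑based modified z‑score (with the commonly used 0.6745 constant and 3.5 cutoff) stands in for Isolation Forest as the second detector. The risk formula, the `saturation` parameter, the 0.5 escalation cutoff, the `Flag` type, and the gauge readings are all hypothetical choices made for illustration.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Flag:
    index: int
    value: float
    risk: float  # 0..1, higher means more suspicious
    level: str   # "sanity-fail", "outlier", or "emergency"


def iqr_ratio(values, k=1.5):
    """Distance beyond Q1/Q3 divided by k * IQR; above 1 means outside the fences."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    width = k * (q3 - q1)  # assumes IQR > 0
    return [max(q1 - x, x - q3, 0.0) / width for x in values]


def mad_ratio(values, cutoff=3.5):
    """Modified z-score (median and MAD based) divided by the cutoff."""
    med = statistics.median(values)
    mad = statistics.median([abs(x - med) for x in values])  # assumes MAD > 0
    return [abs(0.6745 * (x - med) / mad) / cutoff for x in values]


def screen(readings, valid_range, known_events, saturation=5.0):
    low, high = valid_range
    flags = []
    # Step 1: sanity checks. Out-of-range records become candidates immediately.
    clean = []
    for i, x in enumerate(readings):
        if low <= x <= high:
            clean.append((i, x))
        else:
            flags.append(Flag(i, x, 1.0, "sanity-fail"))
    # Step 2: automated screening. Risk = detector consensus * capped magnitude.
    values = [x for _, x in clean]
    per_point = zip(iqr_ratio(values), mad_ratio(values))
    for (i, x), ratios in zip(clean, per_point):
        votes = sum(r > 1.0 for r in ratios)
        if votes == 0:
            continue
        risk = (votes / len(ratios)) * min(1.0, max(ratios) / saturation)
        # Step 3: contextual enrichment. A known event upgrades a high-risk flag.
        level = "emergency" if risk >= 0.5 and i in known_events else "outlier"
        flags.append(Flag(i, x, risk, level))
    return sorted(flags, key=lambda f: f.index)


# Hypothetical river-gauge readings in meters; index 9 coincides with a storm warning.
readings = [2.1, 2.0, 2.2, 2.1, 2.3, 2.2, 2.1, 2.0, 2.2, 6.8, 2.1, -9.0]
for flag in screen(readings, valid_range=(0.0, 10.0), known_events={9}):
    print(flag)
```

The remaining steps (human review, action, post‑event analysis) are organizational rather than algorithmic, so the sketch stops at producing a list of flags that a dashboard could present to reviewers.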

5. Ethical and Governance Considerations

- Bias Propagation – If detection thresholds are calibrated on historical data that under‑represents certain groups (e.g., low‑income neighborhoods), outliers affecting those groups may be overlooked, leading to inequitable emergency response.

- Privacy – Real‑time monitoring of social media for anomaly detection must respect user consent and data‑protection regulations (GDPR, CCPA).

- Transparency – Stakeholders should understand why a particular data point was flagged. Explainable AI techniques (e.g., SHAP values for Isolation Forest) can illuminate the reasoning behind a high risk score.

- Accountability – Document decision logs for each flagged event, enabling audits and post‑mortems. This practice mirrors the chain‑of‑custody standards used in emergency services.
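For the accountability point, one possible shape for a decision log is an append‑only file with one JSON object per line. The sketch below only builds the log line. The `DecisionRecord` type and all of its field names are hypothetical, and the decision values mirror the confirm, reject, and annotate options from the human review step.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    record_id: str     # identifier of the flagged data point (hypothetical scheme)
    detector: str      # which detector or rule raised the flag
    risk_score: float
    decision: str      # "confirmed", "rejected", or "annotated"
    reviewer: str
    rationale: str
    decided_at: str    # ISO 8601 timestamp in UTC


def to_log_line(record):
    """Serialize a decision as one JSON line suitable for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


entry = DecisionRecord(
    record_id="gauge-07/000009",
    detector="iqr+mad",
    risk_score=1.0,
    decision="confirmed",
    reviewer="analyst-on-call",
    rationale="Reading coincides with an active storm warning.",
    decided_at=datetime.now(timezone.utc).isoformat(),
)
print(to_log_line(entry))
```

Recording the rationale alongside the score is what lets a later audit distinguish a detector failure from a reviewer judgment call.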

Concluding Thoughts

Outliers and emergencies occupy opposite ends of the analytical spectrum—one lives in the abstract realm of numbers, the other unfolds in the physical world—but they share a fundamental characteristic: each signals a departure from the expected baseline that demands attention. By treating outliers not merely as statistical nuisances but as potential early warnings, analysts can adopt a proactive stance that mirrors emergency management practices. Conversely, framing emergencies as “real‑world outliers” encourages responders to make use of data‑driven tools, from sensor networks to anomaly‑detection algorithms, to spot the faintest tremors before they become catastrophes.

The synthesis of these perspectives yields a robust, iterative workflow in which algorithms surface candidates, domain experts provide context, and organizational protocols translate insights into action. This loop enhances both data integrity and societal resilience, ensuring that rare events, whether a rogue data entry or a sudden flood, are identified, understood, and addressed with the right blend of speed, precision, and empathy.

In the final analysis, the true power of outlier detection lies not in the isolated identification of odd numbers, but in the strategic alignment of statistical insight with real‑world response. When the two domains inform each other, we move from reactive firefighting to anticipatory stewardship—turning what once appeared as an aberration into a catalyst for smarter, safer, and more informed decision‑making.